March 4, 2012

Are teams benefiting from relievers pitching less? A visual analysis.

If you love baseball, and particularly if you love baseball stats, you need to follow FanGraphs. The depth of the analysis is simply incredible, but one thing I find lacking is visual analysis. There are often tables and some rudimentary charts, but I think the writing could be enhanced by adding some viz to the terrific explanations of the numbers.

Recently they wrote about the use of relief pitchers in Major League Baseball and whether adding depth to the bullpen resulted in a strong ROI. In this post, I’m going to quote directly from the article, but all of the charts and graphs that supplement the words were created by me.

All of the data I used can be found here, and the Tableau workbook I used to create the charts can be found here.

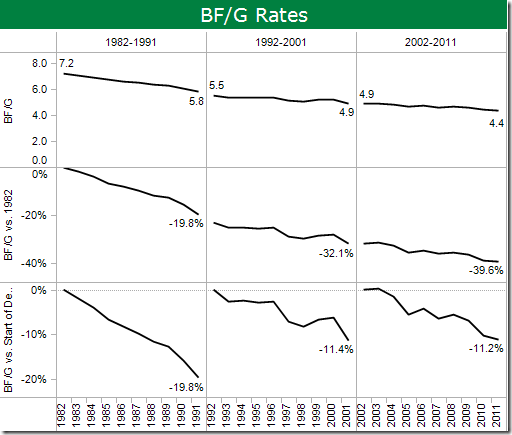

Batters Faced per Game

“The change in bullpen usage is the biggest difference in the sport now compared to 30 years ago.”

“Despite the fact that modern bullpen roles have been well established for quite a while, the dwindling rate of batters faced per appearance shows no signs of slowing down. While the drop from 1982-1991 was the most extreme, the last two decades have each seen the league shed an additional half a batter per reliever appearance, and given that we’ve seen teams now expand to carrying 13 pitchers at times, there seems to be no end in sight to this trend.”

The article provides only a table, and if the writer had not spelled out the analysis in words, no one would ever have spotted this trend by scanning a list of numbers.

The chart above is broken down by decade and by year, and it includes three ways of analyzing batters faced per game (BF/G).

- BF/G (top lines) – This is simply the trend in batters faced per game over the last 30 years. As the writer points out, the drop in the first decade is the most extreme (a 1.6 BF/G decline), but each of the last two decades has also shed roughly half a batter (0.6 and 0.5 BF/G, respectively).

- BF/G vs. 1982 (middle lines) – I wanted to understand how drastically the number of batters faced per relief appearance has changed over the last 30 years. The numbers and trend are truly staggering: a 19.8% decline by 1991, an additional 12.3% decline by 2001, and another 7.5% decline through 2011. That all adds up to a decline of almost 40% relative to 1982.

- BF/G vs. Start of Decade (bottom lines) – This is similar to the middle lines, except the calculation “resets” each decade. The idea is to measure how much the BF/G rate has changed within each decade. If the -11% trend of the last two decades continues, you can expect relief pitchers to face fewer than four batters per appearance by 2021. Basically, every pitcher would be treated like a closer.
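All three BF/G views come from a single yearly series. Below is a minimal Python sketch of the two derived measures, assuming the yearly values sit in a dict keyed by season and that the decade blocks are 1982-1991, 1992-2001 and 2002-2011. The function names and the sample numbers are hypothetical; the actual charts were built in Tableau from the linked data.

```python
def pct_change_vs_base(series, base_year):
    """Percent change of each season relative to one fixed season (e.g. 1982)."""
    base = series[base_year]
    return {year: (value / base - 1) * 100 for year, value in series.items()}


def pct_change_vs_decade_start(series, first_year=1982, span=10):
    """Percent change relative to the first season of each decade block.

    Assumes blocks of `span` seasons starting at `first_year`
    (1982-1991, 1992-2001, 2002-2011) and that each block's first season
    is present in `series`.
    """
    result = {}
    for year, value in series.items():
        start = first_year + (year - first_year) // span * span
        result[year] = (value / series[start] - 1) * 100
    return result


def project(value, pct_per_decade, decades=1):
    """Carry a per-decade percent change (e.g. -11) forward from `value`."""
    return value * (1 + pct_per_decade / 100) ** decades


# Hypothetical BF/G values, for illustration only (not the FanGraphs data).
sample = {1982: 8.0, 1983: 7.6, 1992: 6.4, 1993: 6.2}
print(pct_change_vs_base(sample, 1982))
print(pct_change_vs_decade_start(sample))
print(project(4.2, -11))
```

Under these assumptions, `project(value_2011, -11)` is the back-of-the-envelope calculation behind the “fewer than four batters by 2021” estimate: any 2011 BF/G value below about 4.49 (4 / 0.89) lands under 4.0 after an 11% drop.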

Wow! Bullpen strategy sure has changed!

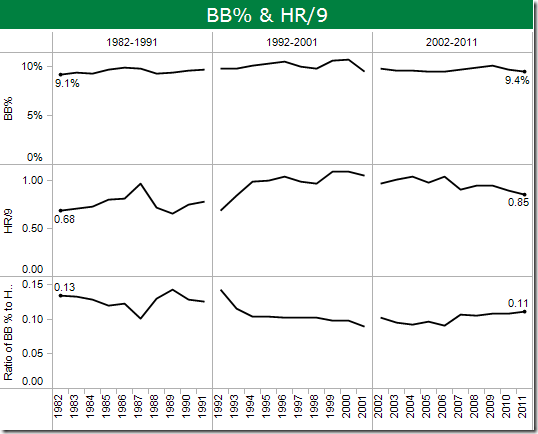

Walk and Home Run Rates

“With pitchers facing fewer batters, you’d expect them to be able to throw harder and exploit platoon advantages for better results overall. The trade-off should be more quality for less quantity.”

“Looking at the numbers, we don’t really see much evidence that the modern bullpen has helped relievers perform better at all.”

- “Over the last thirty years, walk rates by relievers are essentially unchanged. They went up a bit when the home run barrage took over the late-1990s, but have gone back down as home runs have become less common.” (top two lines)

- “The ratio of walks to home runs is pretty steady and consistent over the last thirty years, and there’s certainly no evidence that the modern day bullpen has helped pitchers avoid the base on balls.” (bottom lines)

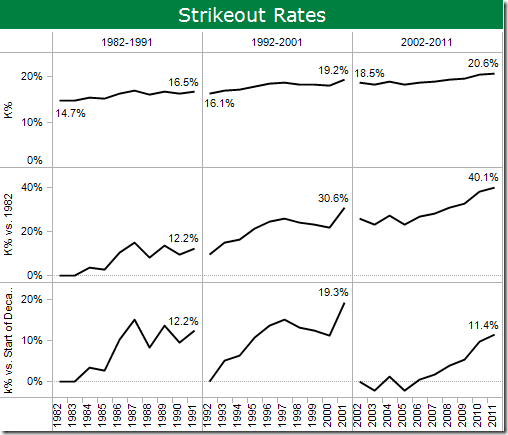

Strikeout Rates

“On the other hand, strikeout rate has skyrocketed, increasing by 40% since 1982. This would seem to support the idea that relievers can be more effective in shorter stints, and that playing the match-ups can help prevent run scoring.”

I have broken down the strikeout rates similarly to the BF/G rates.

- K% (top lines) – This is simply the trend in strikeout percentage over the last 30 years. K% has risen steadily, by about 2-3 percentage points in each of the last three decades.

- K% vs. 1982 (middle lines) – As the writer noted, the strikeout rate has increased 40% since 1982, with the biggest increase from 1992-2001 of 18.4%. But he also goes on to explain this:

“While (starting pitchers’) strikeout rate has been raising at the same time that the modern bullpen has been evolving, this seems to be a case where correlation is not causation. If starters are seeing the same rise in strikeout rate, that points to a more fundamental shift among hitters – more sluggers swinging for the fences, the rise in acceptance of the strikeout as just another out among organizations – rather than a specific benefit being given to relievers from their new roles.”

- K% vs. Start of Decade (bottom lines) – Again, this is similar to the middle lines, except the calculation “resets” each decade. This provides stronger evidence of the “swinging for the fences” effect of the late 1990s: strikeout rates increased 19% from 1992-2001.

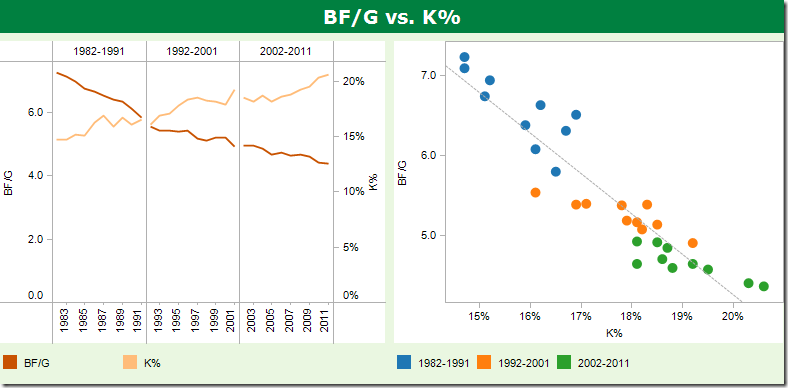

BF/G vs. K%

We’ve seen the writer discuss BF/G and K% rates, but do these two have a relationship? When I look at the relationship between two stats, I like to view it two ways: (1) a dual-axis line chart and (2) a scatter plot.

The strikeout rate for relievers is clearly (inversely) correlated with batters faced per appearance: as BF/G goes down, K% goes up. This is clear and easy to see in both charts. It would have been a nice nugget for the writer to include.
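One way to put a number on “as BF/G goes down, K% goes up” is the Pearson correlation coefficient across seasons, where a value near -1 means a strong inverse relationship. This is a minimal plain-Python sketch; the function name and the sample values are hypothetical and are not the data behind the charts above.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Hypothetical seasons: BF/G falling while K% rises (illustration only).
bf_per_game = [6.8, 6.1, 5.5, 5.0, 4.6, 4.2]
k_pct = [14.5, 15.2, 16.9, 18.0, 19.6, 21.3]
print(round(pearson_r(bf_per_game, k_pct), 3))
```

The scatter plot shows the same thing visually; the coefficient just condenses it into one number.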

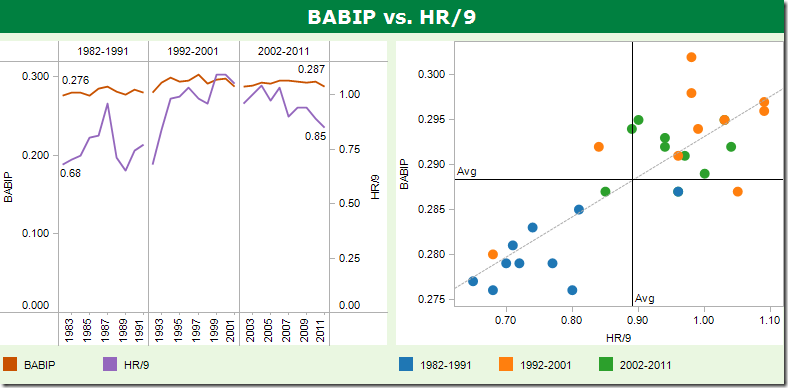

BABIP and HR Rates

“Likewise, it doesn’t appear that relievers are really generating much of a benefit when hitters do put the bat on the ball.”

The writer makes a few notes about the stats, but I don’t completely agree.

- “Home run rates have risen at a similar rate as what starting pitchers have experienced.” Ok, nothing to argue with here. I have to take his word for it since I don’t have the data for starting pitchers.

- “Batting average on balls in play has increased significantly over the years.” I mildly disagree here. BABIP has increased by only 11 points, or about 4%. Is that significant? I don’t think so.
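For reference, BABIP is usually computed as (H - HR) / (AB - K - HR + SF), and a change of 11 “points” means 0.011 in batting-average terms. The sketch below shows both calculations. The function names and all inputs are hypothetical; the 0.275 to 0.286 pair was chosen only to show how an 11-point rise on a base near .275 works out to about 4%.

```python
def babip(h, hr, ab, k, sf):
    """Batting average on balls in play: (H - HR) / (AB - K - HR + SF)."""
    return (h - hr) / (ab - k - hr + sf)


def points_and_pct_change(old, new):
    """Change in 'points' (thousandths) and as a percent of the old value."""
    points = round((new - old) * 1000)
    pct = (new - old) / old * 100
    return points, pct


# Hypothetical league-wide reliever totals, for illustration only.
print(babip(h=1500, hr=140, ab=6000, k=1100, sf=50))
print(points_and_pct_change(0.275, 0.286))
```

The point is that “points” and “percent” describe the same change on different scales, which is why an 11-point move can still be a modest relative shift.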

Let’s extend the analysis a bit further and look again at the relationship between the two stats to find correlations.

When you look at the two lines together, there isn’t a clear correlation like the obvious inverse relationship between BF/G and K%. What is interesting, though, is what happens when you plot them on a scatter plot. I added the 30-year averages to each axis to make a quadrant chart. The R-squared is only 0.688, but what sticks out to me is how, for the most part, the years within each decade cluster together nicely.

- Nine of ten years from 1982-1991 were below average for both BABIP and HR/9, with the tenth year also below the average BABIP.

- Eight of ten years from 1992-2001 were above the average BABIP with seven of those years also above the average HR/9 (remember, there was a significant increase in HRs in the late-1990s). Note how much higher the BABIP and HR/9 rates are for the years above average.

- From 2002-2011, nine of ten years were above average for both BABIP and HR/9, but not nearly as far above average as in 1992-2001. Notably, the HR/9 rate fell from 1.03 in 2006 to 0.85 in 2011, a 17.5% decrease (visible in the line chart).
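The quadrant chart, the R-squared and the HR/9 decline can each be reproduced in a few lines. This is a minimal sketch, assuming BABIP and HR/9 are the two axes and the 30-year averages are the dividing lines. The function names are hypothetical, and the only real figures used are the HR/9 values quoted above. For a single least-squares line, R-squared equals the squared Pearson correlation, which is what `r_squared` computes.

```python
def quadrant(babip, hr9, avg_babip, avg_hr9):
    """Label a season relative to the 30-year average on each axis.

    A value exactly equal to the average is counted as 'below'.
    """
    b = "above" if babip > avg_babip else "below"
    h = "above" if hr9 > avg_hr9 else "below"
    return f"BABIP {b} avg / HR/9 {h} avg"


def r_squared(xs, ys):
    """R-squared of a simple least-squares line (the squared Pearson r)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy * sxy / (sxx * syy)


def pct_change(old, new):
    """Percent change from old to new."""
    return (new - old) / old * 100


# HR/9 figures quoted above: 1.03 in 2006, 0.85 in 2011.
print(round(pct_change(1.03, 0.85), 1))  # -17.5
```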

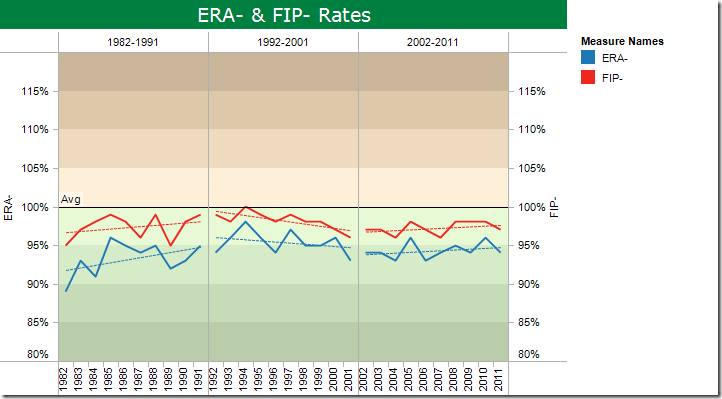

ERA- and FIP-

“If you look at (ERA- and FIP-), there’s just no evidence that bullpens are preventing runs at a better rate now than they were before the current roster construction norms came along. Any improvements in quality of performance by the elite relievers have been offset by the fact that more innings are now being given to inferior arms, so the trade-off has essentially resulted in a change of no real benefit.”

If you truly trust the writer, then your only choice is to take him at face value here. Me, though, I like to “see” the data. I’ve done a couple of things here to visualize the data, but first, two notes.

- For ERA- and FIP-, values below 100% are better than the league average. The lower the number, the better. Think of them like an index. If the ERA- is 95%, then that means it’s 5% better than the league average.

- Notice that the axes range from 80% to 120%. This was done to emphasize the lack of significant year-to-year variation.
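The index idea can be sketched as the stat divided by the league average, times 100. This is a simplified version under a loud assumption: FanGraphs’ actual ERA- and FIP- also adjust for park effects, and this sketch does not. The function names and sample numbers are hypothetical.

```python
def minus_index(stat, league_avg):
    """Simplified ERA-/FIP-style index: 100 * stat / league average.

    Assumption: no park adjustment (FanGraphs applies one).
    Below 100 is better than league average.
    """
    return 100 * stat / league_avg


def pct_better_than_league(index):
    """An index of 95 reads as 5% better than league average."""
    return 100 - index


# Hypothetical reliever ERA of 3.80 against a league ERA of 4.00.
idx = minus_index(3.80, 4.00)
print(round(idx, 1), round(pct_better_than_league(idx), 1))
```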

For this particular chart I have:

- Added a reference line at 100% to remind us that this is the average

- Synchronized the axes so that you can see how ERA- and FIP- compare to each other

- Added color bands below and above the average to indicate levels of goodness and badness: the darker the green, the better, and the darker the tan, the worse.
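The chart itself was built in Tableau, but the banding logic can be sketched in Python. The 5-point band width is a hypothetical cutoff (the post does not state the actual band edges), and values are clipped to the 80-120 axis range described above. A higher depth would correspond to a darker shade.

```python
import math


def band(index, step=5, low=80, high=120):
    """Assign an ERA-/FIP- value to a shaded band around the 100 reference line.

    Hypothetical cutoffs: bands are `step` points wide and values are clipped
    to the [low, high] axis range. Returns (color, depth), where green means
    better than average, tan means worse, and a larger depth is a darker shade.
    """
    clipped = max(low, min(high, index))
    if clipped == 100:
        return ("average", 0)
    depth = math.ceil(abs(clipped - 100) / step)
    color = "green" if clipped < 100 else "tan"
    return (color, depth)


for value in (97, 94, 103, 118, 75):
    print(value, band(value))
```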

Now, after having “seen” the data, I agree with the writer that “there’s just no evidence that bullpens are preventing runs at a better rate now than they were before the current roster construction norms came along.”

So what do you think? Do these charts and graphs make the stats easier to interpret? Do they help tell the story more effectively?

Nice job. Your graphics improve the original article by letting the statistics show the trends.

My problem with these fan sites is that any graphics are way overdone, the chart types are those generally frowned upon by serious charting authorities, and the formatting is atrocious. When I've suggested alternatives, I'd be told "Yeah, your chart is good, but I like all the colors in mine."

Andy - Great work as usual !! This inspires me to finish my Cricket Dashboard !

Jon - I agree, we have the same issue @ work also. People tend to get distracted by pretty colors and want that instead of the actual point behind the chart