December 23, 2013

Tableau Tip: Using actions to “reset” a chart to the most recent date

A great Tableau question came up at Facebook the other day. Oftentimes our users are especially interested in the most recent daily data, but like to have the freedom to view historical details on demand as well. Trend lines are generally good at telling the historical story, but don’t always provide all of the details that users want. Wouldn’t it be cool to use a trend line to filter the specific components of a dashboard to any historical data point, but then be able to immediately revert to the most recent date on demand?

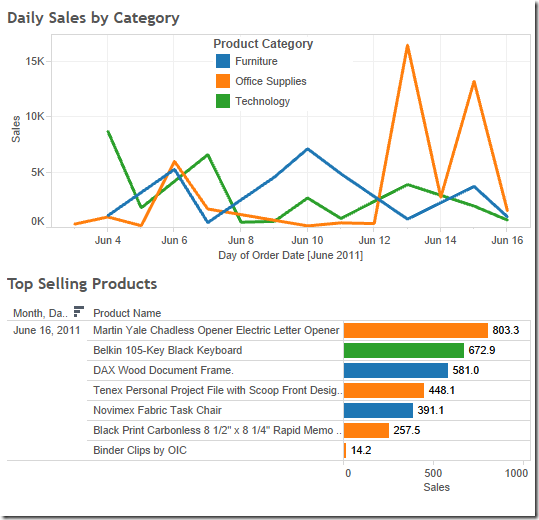

Suppose you have a simple dashboard composed of two sheets:

- A trend line that shows daily sales across product category

- A horizontal bar chart that shows the top selling products.

Now say we want the end user to select a mark on the trend line and have the bar chart update to a list of products within that respective product category and show sales for only that day. Tableau makes this very straightforward through the use of a filter action. However, what if the user unselects that mark on the trend line and we want the bar chart to revert back to only reflecting sales data for the most recent date of data? Without some extra manipulation and a couple table calculations, Tableau’s default behavior would be to clear all actions and show product sales for all dates in the partition, rather than only the most recent one. In other words, there are generally two things we want here:

- The bar chart should only reflect the most recent day of data by default (in this case June 16th).

- When a mark on the trend line is selected, the bar chart should dynamically filter to that respective day of data. However, if that selection is cleared, or if that mark on the trend line is unselected, we want the bar chart to revert back to only reflecting sales data for the most recent date (June 16th).

Using the Superstore Sales Excel data, drag Sales to the Rows Shelf, Product Category to the Color Shelf, and right-click drag and drop Order Date to the Columns Shelf, choosing continuous days.

Also, we’ll need a date filter so that Tableau only shows the most recent two weeks in the view to simplify things. Drag Order Date to the Filters Shelf, choose “Individual dates,” go to the “Top” tab, and choose Top 14 by Minimum Order Date. This filters the partition to the most recent two weeks of data.

Let’s make this a global filter by right-clicking on the date field on the Filters Shelf and choosing “Apply to Worksheets,” then “All Using this Data Source.” Now every viz we make using this data source will only reflect the most recent two weeks of data.

Cool, so far so good. Nothing tricky here. Now on to the bar chart. Drag Sales to the Columns Shelf, Product Name to the Rows Shelf, and sort descending by the sum of Sales. I like to add labels on the end of each bar by selecting the “Abc” icon on the toolbar as well. We can also add Product Category to the Color Shelf so that it matches the color scheme in the trend line:

Note that the view is showing the top products by sales within the last two weeks cumulatively. In order to satisfy #1 of our requirements, our users want to see data only for the most recent day by default, rather than the most recent two weeks as a whole. This can be done in the dashboard by creating a filter action and selecting a mark on the trend line for the most recent day, but clearing the selection would just show cumulative two-week sales again, which is not what we’re looking for. In order to get around this, we can add discrete days to the view, and use the INDEX() function to rank products within each discrete day of data. This will essentially allow Tableau to segment a list of top products within each day.

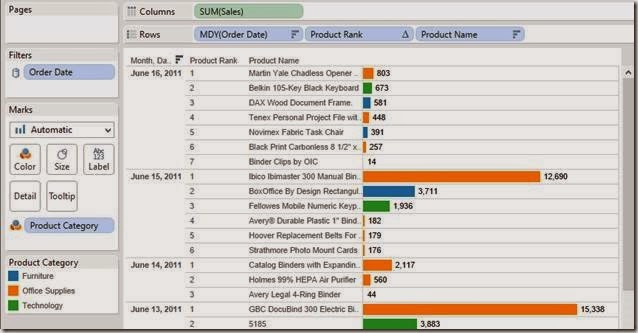

NOTE: Since this is being done in version 8.0.x, I’m using the INDEX() function to get what we need here. Version 8.1 introduced some enhanced ranking functionality with the RANK() function, but the general framework behind this between v8.0 and v8.1 should be very similar.

Right-click drag and drop Order Date to the left of Product Name on the Rows Shelf and choose the discrete MDY option. Right-click on this field and choose Sort, then choose Descending in data source order. This places the most recent day of data on the top of the list in the view. You should have something like this:

Now we need to use a table calculation to sort products within each day, rather than on the aggregate over all days. Create a calculated field, name it Product Rank, and use the index function in the Formula box.

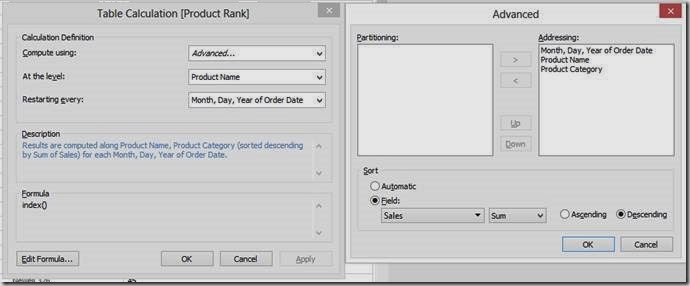

Right click on this newly created calculated field from the measures list in the data window and choose “Convert to Discrete.” Then, drag and drop this between the Order Date and Product Name on the Rows Shelf. Things will look somewhat funky, but we’ll fix this by right-clicking on the Product Rank field on the Rows Shelf and choosing “Edit Table Calculation…” Choose Compute using > Advanced. We want the table calculation to be addressed along Month, Day, Year of Order Date, Product Name, and Product Category in that order, and sorted descending by the sum of Sales. Then select At the level: > Product Name and Restarting Every: > Month, Day, Year of Order Date. Here’s the snapshot of the dialog boxes:

The sheet should now have products correctly sorted in descending order by the sum of sales within each discrete day.
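If it helps to see what that table calculation is doing outside of Tableau, here is a rough Python sketch of the same idea: sum sales per product within each day, sort descending, and restart the numbering for every day. This is not Tableau code; the sample rows and the `rank_products_by_day` helper are made up purely for illustration.

```python
from collections import defaultdict
from datetime import date

# Hypothetical sample rows: (order_date, product_name, sales)
rows = [
    (date(2013, 6, 16), "Stapler", 120.0),
    (date(2013, 6, 16), "Desk", 900.0),
    (date(2013, 6, 16), "Stapler", 50.0),
    (date(2013, 6, 15), "Chair", 300.0),
    (date(2013, 6, 15), "Desk", 450.0),
]


def rank_products_by_day(rows):
    """Return {order_date: [(rank, product, total_sales), ...]}.

    The rank restarts at 1 for every day, mirroring an INDEX() that
    restarts every Month, Day, Year of Order Date.
    """
    # Aggregate sales per product within each day.
    totals = defaultdict(lambda: defaultdict(float))
    for order_date, product, sales in rows:
        totals[order_date][product] += sales

    ranked = {}
    for order_date, per_product in totals.items():
        # Sort descending by the sum of sales, then number from 1.
        ordered = sorted(per_product.items(), key=lambda kv: kv[1], reverse=True)
        ranked[order_date] = [
            (rank, product, total)
            for rank, (product, total) in enumerate(ordered, start=1)
        ]
    return ranked


product_rank = rank_products_by_day(rows)
```

Each day gets its own 1, 2, 3... list, which is the behavior the “Restarting Every” setting is there to produce.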

Finally, we need to do this one more time for dates. However, we’ll use the index function differently this time. Since Order Date is already sorted, we don’t necessarily need the INDEX() function strictly to sort stuff. We can instead leverage the more formal ranking to dynamically filter only the top-ranked date. This is different from directly filtering the date field because that would not allow this sheet to interact with other sheets in the dashboard based on Order Date in the dynamic sense that we’re looking for. The INDEX() ranking allows Tableau to make two passes on the partition, therefore telling Tableau to aggregate sales for the past 14 days, but still giving us the ability to only view details for one date at a time dynamically and interactively.

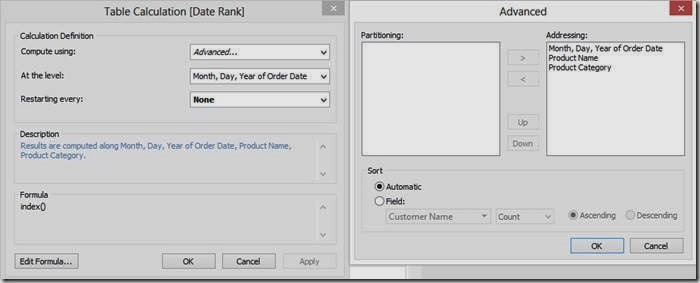

To create the second calculated field, right-click on Product Rank from the measures list and choose “Duplicate.” Rename it “Date Rank.” Drag this to the left of MDY (Order Date) on the Rows Shelf. We want to choose some advanced computation settings for this as well, so go to “Edit Table Calculation,” and edit the “Compute Using” settings so that the table calculation addresses Order Date, Product Name, and Product Category respectively. Then we want the results to be computed along Order Date so we can assign a rank to each discrete day in the view.

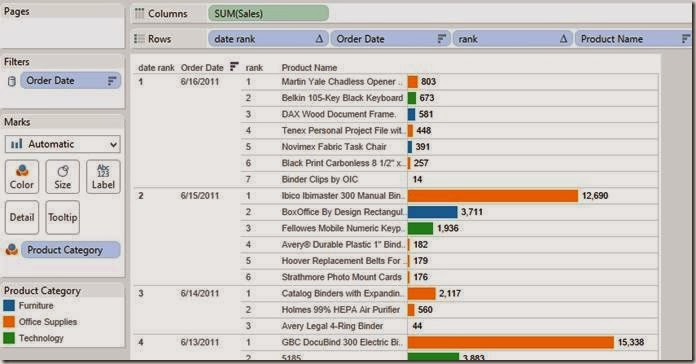

This should give us a list of products ranked in descending order by sales within each day, which is also assigned a rank in descending data source order.

Now all we need to do is filter the viz so that it only shows the #1 ranked date, which is also the most recent day of data by default. Double-click on the Date Rank field on the Rows Shelf, and edit the selection so that only #1 is chosen. This is super important because, by filtering the Date Rank, we’ve just completed both #1 and #2 of our requirements for this dashboard. Not only is the most recent date now always going to be displayed by default, but we can also filter to other dates and clear the filter to revert back to the most recent.
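The reason this works is that the Date Rank is computed over whatever dates are left after the action filter has been applied. Here is a small Python sketch of that logic, not anything Tableau actually runs; `visible_date` and the sample dates are hypothetical names used only to illustrate the behavior.

```python
from datetime import date


def visible_date(available_dates, selected_date=None):
    """Pick the single date the bar chart should show.

    The action filter (if any) narrows the dates first. The date rank is
    then computed over what remains, and only rank 1 is kept.
    """
    candidates = [
        d for d in available_dates
        if selected_date is None or d == selected_date
    ]
    # Rank 1 is the most recent remaining date (dates sorted descending).
    ranked = sorted(candidates, reverse=True)
    return ranked[0] if ranked else None


dates = [date(2013, 6, 14), date(2013, 6, 16), date(2013, 6, 10)]

default_view = visible_date(dates)                      # no mark selected
filtered_view = visible_date(dates, date(2013, 6, 10))  # mark selected
```

With no selection, rank 1 is the latest date. With a selection, the only date left is the selected one, so it becomes rank 1 and passes the same filter.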

All that’s needed now is some clean-up and actually building the dashboard. To hide some of the redundant stuff, right click on the Date Rank field on the Rows Shelf and uncheck Show Header, and do the same for the Product Rank field on the Rows Shelf as well. We’ll leave the date field included to ensure things are working correctly.

To make the dashboard, arrange the sheets to look something like this:

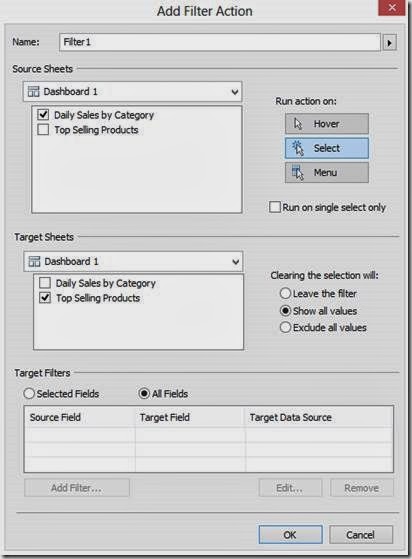

Finally, all we have to do is create the filter action and we’ll be good to go.

We now have a dashboard that only reflects product sales for the most recent day of data in the bar chart, and after interacting with the trend line, the bar chart will always default back to this day as well. This is a pretty cool functionality to have because often our users only care about what is happening today, but like to have the ability to look back at a snapshot in time to view more specific data that otherwise couldn’t be seen in the trend line.

Check it out. Play with it yourself below. Notice how the bar chart defaults to the last date. Click on a dot on the line chart, for example June 10th. Notice how the bar chart updates to reflect Jun 10th. Now deselect that point and the bar chart goes back to the latest date.

December 4, 2013

Tableau tip: Don’t waste the ends of your sparklines…make them actionable!

Sparklines are one of my favorite chart types to include in dashboards, yet I see many people using them without providing enough context. Some people like to add bandlines, some like to add sets of dots, some like to add text, all in an effort to add meaning to sparklines. These are perfectly fine, but I think there’s a better way to make sparklines actionable. Is this the best way? Maybe not, but it is an alternative worth considering.

Sparklines were first introduced by Edward Tufte in his book Beautiful Evidence. Tufte says: “A sparkline is a small intense, simple, word-sized graphic with typographic resolution.” Stephen Few expands Tufte’s definition in his book Information Dashboard Design: “Their whole purpose is to provide a quick sense of historical context to enrich the meaning of the measure. This is exactly what’s required in a dashboard.”

When someone is creating a dashboard, they should provide as much information and meaning as possible to make the information actionable. I don’t see any examples from Tufte, Few or Jim Wahl that provide much meaningful context to the end of a sparkline.

Tufte provides some examples:

He might add a red dot to the end of the line along with some text to highlight the latest value.

While it’s a bit tough to see in this next example, Tufte has used red dots for the beginning and ends of the lines and blue dots to indicate the highest and lowest values.

It’s important to also note how Tufte always includes the values associated with all of the highlighted dots.

There are tons and tons of examples of how Stephen Few uses sparklines. Consider this example from his whitepaper Dashboard Design for Real-Time Situation Awareness.

Few says: “Meaningful context has been added to these metrics in the form of sparklines, which provide a quick sense of the history that has led up to the present.” This small section of a dashboard is a classic Few design. You’ll often see him use (1) sparklines, (2) a visual indicator of health (the red dots in this case), and (3) bullet charts closely together.

When I use sparklines, I like to combine all of the elements of Tufte and Few designs. Let’s look at an example.

On the left you see the sparklines, but notice that I use the dot on the end of the line as an indicator to take action. Tufte uses the dot on the end to indicate you’re at the end. Does that make it actionable? Not necessarily. Few separates the indicator into its own space and does not mark the end of the sparkline. My version saves space, increases the data-to-ink ratio, and provides a visual indicator to the reader in one chart.

The table to the right summarizes the sparkline, pulling from Tufte’s practices. In this example, I’m concerned with comparing the last two 7-day periods. Notice how I used conditional formatting so that the dot on the end of the line is the same color as the text in the WoW and WoW% columns. I don’t use bullet graphs because I feel that the text itself is sufficient; I don’t want to add a graph for the sake of having a graph for everything.

Simple, concise, actionable…all things you want in a dashboard. Keep reading to see how I built these sparklines in Tableau.

Step 1: The date calculations I use in the example below are simple and efficient when you include a Max Date field in your data source. Creating Max Date as a calculated field directly in Tableau won’t always work since you need the Max Date at the row level. In this example, I’ve switched the Superstore Sales data source to Custom SQL and added a subquery to include the Max Date at the row level.
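To give a feel for what “Max Date at the row level” means, here is a minimal sketch using Python’s built-in sqlite3 module. The `orders` table, its columns, and the sample rows are assumptions for illustration only; the actual Custom SQL against Superstore Sales isn’t shown in this post, and your database’s syntax may differ.

```python
import sqlite3

# In-memory database with a hypothetical, tiny orders table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (order_date TEXT, category TEXT, sales REAL)")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [
        ("2013-06-14", "Furniture", 100.0),
        ("2013-06-15", "Technology", 250.0),
        ("2013-06-16", "Office Supplies", 75.0),
    ],
)

# The scalar subquery repeats the overall maximum date on every row,
# so row-level calculations can compare order_date against it.
query = """
SELECT o.order_date,
       o.category,
       o.sales,
       (SELECT MAX(order_date) FROM orders) AS max_date
FROM orders AS o
ORDER BY o.order_date
"""
result = conn.execute(query).fetchall()
```

Every row comes back carrying the same `max_date` value, which is what lets the later calculations compare each row’s date to it.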

TIP: If you have a large dataset, Tableau will run more efficient queries if you push the custom SQL into a view in your database. Tableau wraps its own SQL around the custom SQL, which can get quite messy and inefficient. Creating a view will simplify the query Tableau runs and improve performance.

Step 2: I like my sparklines to show the last 30 days, so I need to include a date filter. I include my date filter as the first step so that my data set is smaller to work with from the outset.

A Boolean calculation works well here. Notice how it leverages the Max Date field. This wouldn’t work if the Max Date was a calculated field inside of Tableau.
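The formula itself isn’t reproduced in the text here, so treat the following as a guess at the logic rather than the exact calculation: a row passes the filter when its date falls within a 30-day window ending on Max Date. The function name and the inclusive 30-day window are my assumptions, sketched in Python.

```python
from datetime import date, timedelta


def in_last_30_days(order_date, max_date):
    """True when order_date is inside the 30-day window ending on max_date.

    Assumes the window is inclusive of max_date, so it reaches back 29 days.
    """
    return max_date - timedelta(days=29) <= order_date <= max_date


max_date = date(2013, 6, 16)
window_start_ok = in_last_30_days(date(2013, 5, 18), max_date)
too_old = in_last_30_days(date(2013, 5, 17), max_date)
```

Because the comparison happens row by row, the maximum date has to be available on every row, which is why it’s brought in through the data source.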

To get my sparklines to look how I like them, the column and row shelves will need to look like this. Let’s break the worksheet down into its pieces.

Step 3: Create a dummy header, place it on the Columns shelf, and hide the field labels for columns.

Step 4: Right-click-drag Order Date to the columns shelf and choose the first option, Order Date (Continuous). Notice how it only shows the last 30 days.

Step 5: Right-click on the Order Date pill and uncheck Show Header. This hides the date axis.

Step 6: Drag Category on the Rows shelf and hide the headers. The headers aren’t needed since they’re on the left side of the table; there’s no need to repeat them.

Step 7: Place Sales on the Rows shelf to the right of Category. This gives us the lines. Make them thinner, change the color to dark gray, and resize the chart to make them look like sparklines.

Step 8: Double-click on the Sales axis to bring up the axis options. Uncheck Include Zero and choose Independent axis ranges for each row or column. This gives us the view that best fits the space. Few talks about the scaling options for sparklines in Chapter 10 of Information Dashboard Design.

Step 9: We need a calculated field to show a dot on the end of the line. You might be tempted to simply turn on the line ends, but that won’t do the trick because you can’t color the line ends only. The calculated field should only capture sales for the last day. This is where our Max Date field comes in handy again.
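Again, the exact formula isn’t shown in the text, so this is only a sketch of the idea in Python: return the sales value when the row’s date equals Max Date and nothing otherwise, so that a single mark is drawn at the end of the line. The `last_day_sales` name and the sample data are hypothetical.

```python
from datetime import date


def last_day_sales(order_date, sales, max_date):
    """Return sales only for rows on max_date; None elsewhere (like a NULL)."""
    return sales if order_date == max_date else None


max_date = date(2013, 6, 16)
daily = [
    (date(2013, 6, 14), 100.0),
    (date(2013, 6, 15), 250.0),
    (date(2013, 6, 16), 75.0),
]
# One value per day; only the final day keeps its sales figure.
dots = [last_day_sales(d, s, max_date) for d, s in daily]
```

Plotting that second measure on a dual axis gives a single dot that can be colored independently of the line.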

Step 10: Drag the new Last Day Sales field onto the Rows shelf to the right of the Sales pill. Right-click on the Last Day Sales pill and choose Dual Axis. Right-click on the scale for Last Day Sales and choose Synchronize Axis. Right-click on Sales pill and uncheck Show Header.

We’re almost done. All we need to do now is color the dot.

Step 11: I like my dots to be colored by the week over week change. This requires me to create several calculated fields. You could combine all of these calculated fields into a single calculation, but I like separating the parts of the calculation to make it easier to understand and so that each calculation is reusable.

Create all of these calculated fields in this order (special thanks to Joe Mako for helping me get these calculations working and showing me why they’re more efficient than what I had been doing):

- Last 7 Day Sales:

IF [Order Date] >= DATEADD('day', -6, [Max Date]) THEN [Sales] END

- Prior 7 Day Sales:

IF [Order Date] >= DATEADD('day', -13, [Max Date]) AND [Order Date] <= DATEADD('day', -7, [Max Date]) THEN [Sales] END

- Total Sales - Last 7 Days:

IIF(LAST()=0, RUNNING_SUM(SUM([Last 7 Day Sales])), null)

- Total Sales - Prior 7 Days:

IIF(LAST()=0, RUNNING_SUM(SUM([Prior 7 Day Sales])), null)

- WoW (week over week):

[Total Sales - Last 7 Days] - [Total Sales - Prior 7 Days]

- WoW %:

[WoW]/[Total Sales - Prior 7 Days]
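To sanity-check the arithmetic behind these calculations, here is a small Python sketch of the same windows: the last 7 days ending on Max Date, the 7 days before that, and the difference and ratio between the two totals. It is not a translation of the table calculations (there is no LAST() or RUNNING_SUM here), and the `week_over_week` helper and sample numbers are made up.

```python
from datetime import date, timedelta


def week_over_week(rows, max_date):
    """rows: list of (order_date, sales). Returns (last7, prior7, wow, wow_pct)."""
    last_start = max_date - timedelta(days=6)    # DATEADD('day', -6, [Max Date])
    prior_start = max_date - timedelta(days=13)  # DATEADD('day', -13, [Max Date])
    prior_end = max_date - timedelta(days=7)     # DATEADD('day', -7, [Max Date])

    last7 = sum(s for d, s in rows if last_start <= d <= max_date)
    prior7 = sum(s for d, s in rows if prior_start <= d <= prior_end)
    wow = last7 - prior7
    # Guard against dividing by zero when there were no prior sales.
    wow_pct = wow / prior7 if prior7 else None
    return last7, prior7, wow, wow_pct


max_date = date(2013, 6, 16)
# Hypothetical data: 20.0 per day in the last week, 10.0 per day the week before.
rows = [
    (max_date - timedelta(days=i), 20.0 if i < 7 else 10.0)
    for i in range(14)
]
# An older row that should fall outside both windows.
rows.append((max_date - timedelta(days=14), 999.0))

last7, prior7, wow, wow_pct = week_over_week(rows, max_date)
```

In this made-up data, sales doubled week over week, so WoW % comes out to 1.0 (that is, 100%).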

Step 13: Right-click on the WoW % pill, go down to Compute Using and choose Order Date.

Step 14: Double-click on the color legend and change the settings to something like these:

You might need to do a bit more formatting to get your viz just the way you want it, but in the end, you’ll want it to look something like this:

Notice that I keep the row banding. I like to include banding on both the sparkline chart and the table so that the reader’s eyes go across the dashboard.

This might seem like a lot of steps, but once you do it a couple of times, it’s pretty quick; you’ll be able to do this in only a couple of minutes.

Building the table is super simple now that you have all of the calculations (this is why I create all of them individually). Download the workbook here to see how all of this was built.

November 30, 2013

November 18, 2013

VizCup Champ–Eric Rynerson: Seeing Red: Home Crowds Bay for Blood; Referees Bend

First I’d like to say that it was a great experience participating in the competition. The other entries were impressive and I was honored to have won.

I had a few reflections on the ride home; I was going to write these down to share with my colleagues back at InsideTrack (where we use Tableau and other tools to explore the impact of our coaching program on student engagement and persistence) so when Andy offered me a guest post here on VizWiz I thought it might be helpful to share them more widely. Below is my viz, and following it are “Five Things I Learned from the Facebook Viz Cup”.

The dashboard answers three questions:

- Do referees punish the visiting team more than the home team? (Definitely. Hover over the regression line to see the coefficient: Way higher than 1.0, indicating more punishment for the visiting team. As I note, the yellow card bias is weaker, suggesting it’s not just gameplay factors)

- Are some referees a lot more or less biased? (Yes, though only a few really look like outliers)

- Do they gain the confidence and maturity to ignore the home crowd’s pressure as they gain experience? (Here’s where I made it interactive- click around in the bubble chart and look at each ref’s personal history on the right; I think you’ll agree that generally they do not get any better)

- Bonus: What does each one look like? Click on a ref in the bubble chart to see images of them from the web in the lower section of the dashboard

Five Things I Learned from the Facebook Viz Cup

1) Failure is okay- just do it fast, and don’t give up

Sometime after midnight on Tuesday, the first of just two evenings I would have to explore the data before the competition, it occurred to me that I was failing.

My plan was to investigate whether local rivalries in soccer really do produce unpredictable results (as the cliché goes, “in the derby the form book goes out the window”), and I really wanted to find the answer. However, whatever I did to get the final output I’d have to repeat on-site in under an hour and then explain it all in 90 seconds to three judges and a room full of people…including a required interactive component.

The problems were multiplying: To answer the question directly I needed to transform the data or compensate with a lot of calculated fields (which would eat up precious minutes Thursday evening), there just weren’t enough crosstown rivalries for consistent patterns to emerge, I kept thinking of variables I needed to take into account that were hard to get or complicated to explain, and so on.

I honestly started to wonder what gave me the idea I had any business driving down to Facebook Headquarters to compete in a data visualization contest. I started to imagine computer science PhDs blowing away the audience with 3D network graphs that respond to a clicked tweetID with a dazzling cascade of retweets to the tune of spacey New Age music. Upon later reflection I suspect it takes longer than an hour to code up something like that, but it was after midnight and my confidence was wavering.

I decided that as long as I avoided embarrassing myself it would be worth the trip just to learn what everyone else did with the same datasets, how they did it, what tools they used and how they learned them. So I vowed that I would revisit the question briefly at lunchtime and then most likely start over with a clean slate on Wednesday evening, perhaps looking at red card distribution. Thank goodness I did! I’m not sure I really “failed fast”, or fast enough anyway, but at least I didn’t give up when my wheels were spinning.

2) Play to your strengths

I was quite excited to see the Premier League dataset among the seven options. I’ve been following Barcelona since 2006-7, Arsenal since roughly 2009-10, and the Seattle Sounders since they joined the MLS in 2009, so coming up with interesting questions to explore was easy. For example, if you only have 45 minutes to spare for a game should you watch the first or the second half? Does more offensive pressure (shots off target, shots on target, corners, etc.) lead to more goals? Do all the player trades teams make in January actually change their trajectories in the second half of the season?

I’d like to believe I would have found compelling stories in the other datasets provided, but I think having a hunch as to where to look for interesting findings helped a lot given the time constraint. I imagine that was also a factor for the participants who work routinely with social media or location data (and built from those datasets)- in fact some of them made comments to that end.

3) Focus on the story

There were some great entries that didn’t get much recognition despite solid functionality and depth, and I think it’s because their presenters emphasized what the user can do (click here to go there, select here to filter that) rather than why they should do it- or missed the opportunity to pick one storyline and follow it to its conclusion using the dashboard.

It’s tempting to leave it to the audience to draw their own conclusions, especially for analytical people (who tend to make claims cautiously, as they fear being wrong even more than they love being right). The problem is that people don’t engage with neutral information the way they engage with an argument- so make one! You can use interactivity to give them a chance to undermine your thesis, or figure out when it works and when it doesn’t, or whatever. But give them something to react to.

By the way, I think this extends beyond static analyses- the best live reports are the ones designed by people who begin by thinking about what questions the report is supposed to answer. You might be interested enough in a report promising “measure X by dimensions A and B”, but you’d be more interested in seeing “last week’s performance leaders and laggards” or “current pipeline bottlenecks”.

4) Keep it simple

Ninety seconds is a very short time. Having to present so quickly was actually a bigger constraint than having an hour to put the viz together: I kept having to remind myself this was not an analysis competition, and that I would only have time to convey two or three points to the audience anyway. So I needed to keep the question simple and the answer simple as well. This is hard for analytical people- we like to think of all the potential holes someone could poke in our argument and fill them with supporting analyses.

Fortunately I had taken some inspiration from watching Ryan Sleeper win the Iron Viz competition at this year’s Tableau conference. Instead of going for analytical depth (I should mention here he had only twenty minutes to build something), his viz answered a simple question and it was very clear how the user was supposed to interact with it. I pulled it up several times as I was iterating toward my final version and the example helped me decide to leave several things out of the main dashboard.

For example, I almost worked in another graph comparing yellow card bias- instead I just included a note and a verbal mention during the presentation. I also wanted to add a section with the list of games in which the selected ref had handed out red cards, as well as another section driven by that list which provided Google News results for the game you clicked on so you could investigate whether the cards were controversial. I was pretty certain this would be awesome, but in the end I worried that all the extra content would make the dashboard look like someone’s personal website from the 1990s (too many frames and scrollbars). I suspect the clutter would have cost me.

5) People love pictures

I usually focus on findings and next steps in my day-to-day communication and don’t often take formatting beyond “professional-looking”, so the other thing that struck me at this year’s Iron Viz competition was how much a couple relevant images from the web or some thematic formatting could add to the viewer experience.

That was part of my inspiration for spending about a third of my prep time Wednesday evening just on the portion of the dashboard that pulls each referee’s images from the web: Trying different sites, reading about the variables in Google’s URLs and experimenting with them, seeing which search terms were most reliable, making sure the user doesn’t have to scroll, etc. It was totally worth the time.

A gif here and there made a lot of the dashboards more fun- like Mike Evans’ alien with the peace sign- though my favorite was the cartoon steer being abducted via tractor beam (bunchball’s dashboard).

Lastly, the verbal picture can be just as impactful. Since most of the audience had likely never attended a game in a soccer-mad country (England, Argentina, the Pacific Northwest…) I related the referee’s plight for them when introducing the questions I was answering. It was something along the lines of: “Imagine having to make a hugely consequential decision in a split second… now imagine 70,000 people screaming at you to make one particular choice. And knowing that they might follow you to your car if they don’t like your decision. You can imagine that it might be hard to stay completely objective!”

So that’s what I learned. Drop me a line if it was helpful; I’d love to hear from you.

November 15, 2013

VizCup roundup–2nd place: Mike Evans, UFO Sightings on Your Birthday

This is a guest post from Mike Evans, the second place finisher at the Facebook VizCup. I love the clean design and the way that it draws you in to play with it. Many of us would not have been surprised if this was the winner. And the fact that Mike flew up from LA just for this event…wow! Truly inspirational!

Panic. It was late. The night before the first-ever VizCup at Facebook Headquarters. I needed a phenomenal data visualization that I could reproduce from scratch on site within a 60 minute time limit. The data set on UFO sightings I’d been poring over revealed no compelling story. I’d come up all the way from Orange County to compete and if inspiration didn’t strike soon, I was going to be embarrassed. I switched to a soccer data set. I know very little about soccer, but I’m generally a sports fan. I quickly had a semi-interesting question. “Can your team pull off a comeback in the Premier League when losing at halftime?” The visual looked ok.

Something wasn’t right though. Do soccer fans call it “halftime”? When I’m presenting this should I actually call it soccer or football? The other thing I didn’t like was that what I really wanted to answer was how likely a team could win when coming from behind at different points in the game. That couldn’t be answered from the data set. Plus I’d only have an hour during the competition to build a great viz and I already had to do a bunch of reshaping of the soccer data to answer this question. Reshaping was time consuming and increased my chances of making a mistake or encountering a technical issue.

So I switched back to the UFO data. Stupid UFO data. Maybe there was a relationship between “The X-Files” episode airing dates in the 1990s and UFO sightings? Did the higher rated episodes correlate to higher sightings that night? That was a dead end. Stupid, stupid UFO data. A little while later I’d plotted out the average delay in reporting UFOs by region. Turns out that some UFO sightings were reported many decades after they were seen, and different states had different averages. Yawn. I grew bored and took a break. I started wondering if there might be a UFO sighting on my birthday on Jan 16, 1977. Turns out there wasn’t. Hmmm, well what about any sightings on Jan 16 of any year? Cool, there were a bunch. Is that a high number compared to other days? I wonder how many were on my anniversary? Wait! This is a great idea for a viz!!!

That was really the inspiration for my viz and I spent my remaining prep time coming up with the best design I could think of that would still be easily reproduced in less than an hour in an unfamiliar environment competing against some of the most talented people in the industry. And so the “UFOs on Your Birthday?” Viz was born and earned the “First Runner Up” status at the Inaugural Facebook Viz Cup.

November 14, 2013

VizCup Roundup–3rd place: Micah Rice, an analysis of natural disasters

This is a guest post by Micah Rice, the third place finisher at the Facebook VizCup. In his day job, Micah is a Strategy Consultant for Wells Fargo.

I was interested in the natural disasters data set for two reasons: 1) because I work in a location analysis group and I wanted to use maps and geo-spatial analysis, and 2) because I am interested in using data to solve big problems that impact people’s lives. The first question I wanted to answer was where in the world disasters happen: most occurrences and most people affected (Dashboard 1). A quick map revealed that China and India have the highest number of events and people impacted. The U.S. has a high number of events, but low population affected.

The second question I had was what type of disasters cause most of the damage and how deadly are they (Dashboard 2). As it turns out, drought affects the most people by far, but extreme temperature kills a much higher proportion of those affected. I plotted this change over time and while the number of events recorded has increased tenfold, the proportion killed has actually decreased over time. That got me thinking about what might be causing the disparities between number of events, people affected, and proportion killed.

I thought a country’s economic ability to deal with a disaster was a good place to start, so I did a quick search and found a GDP by country data set online and created a little interactive toggle to bring in Per Capita GDP cohorts into the analysis. This new data revealed that not only are poorer countries more likely to experience natural disasters, but they see much higher rates of mortality in several disaster categories.

This made me question what could be done about this from here in the Bay Area (Dashboard 3). I plotted the number of disasters and the distance from San Francisco in a few different ways and found that if an aid organization wanted to be well-located in order to respond quickly to these disasters, they would not be located here, but rather somewhere near the Middle East or South Asia.

While this analysis does not solve any real problems, I was very happy to think that good visual analytics might serve to improve the logistics around disaster response, and possibly even inform policy around what factors contribute to the unevenly-concentrated human impact we saw in the data.

November 13, 2013

VizCup roundup–data viz hackers unite!

Last Thursday evening, Facebook hosted 80 people for the VizCup, a data visualization competition. We provided eight data sets two days ahead of time for the participants to choose from. The data ranged from UFO sightings, to natural disasters, to the Premier League, to Foursquare check-ins, to stats about blogs, pages and portals. They also had the option to supplement the data with data of their own, like corn yields to see if there’s a relationship to UFO sightings.

Participants could self-organize into teams or work on their own. In the end, we had 25 individuals or teams present.

Ken Rudin, who leads all of analytics at Facebook, kicked off the evening with a few words about what it means to be an analyst and the future of analytics. Ken was followed by our three awesome judges, who came out to loud cheers and a bit of Crazy Train for intro music. Anya A’hearn of datablick, Drew Skau of Visual.ly and Cole Nussbaumer of storytelling with data served as our judges. Check out Cole’s review of the VizCup. When the hacking began, the judges roamed the room to get a feel for what people were building.

One interesting note was that every participant, except one, chose to use Tableau to build their viz, despite being given the freedom to use whatever tool they wanted. Personally, I think this is for two primary reasons:

- Tableau’s ease of use and the ability for a user to build something meaningful in an hour

- The enthusiasm and passion of the Tableau community. What I mean by that is Tableau’s users look for any excuse to use Tableau in their free time. Mike Evans, our 2nd place finisher, even flew up from LA!! Now that’s passion!

There will be summaries of the top 3 finishers coming soon, written in their own words. But first, here are a few pictures to give you a feel for the atmosphere (thanks to Peter Bickford of Slalom Consulting for many of the pictures). Farther down in this post, you can see some of the entries submitted. We’re super excited to host the event again soon!

November 4, 2013

Data Viz, Facebook, and you. Join our awesome team!

An overview of the role can be found on Facebook Careers.

It's been quite challenging to find strong candidates.

Why don’t people meet the bar?

- Overstating Tableau skills

- Overstating SQL skills

- Too much dependency on tools to do the thinking

- Lack of product sense

- Too much dependency on data engineers

- Strong ability to tell stories with data

- Strong in data visualization

- Competent in SQL

- Be able to demonstrate excellent product sense (i.e., Can you pick up product goals, concepts and requirements quickly?)

- Tool-agnostic critical thinkers

October 29, 2013

Tableau Tip: Change the chart type of a single chart with a parameter

In this example, I'm allowing the user to choose between a bar chart and a line chart via a parameter. First, you have to create the "viz picker" parameter.

Parameters are useless unless you create a calculated field based on them. In this case, I'm going to create two calculated fields:

1. Sales Bar: IIF([Viz Type]='Bar',[Sales],null)

2. Sales Line: IIF([Viz Type]='Line',[Sales],null)
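As a rough illustration of what these two calculated fields do, here is the same logic sketched in Python. This is not Tableau syntax; the function names are made up for the example. Only one of the two measures carries a value at any time, which is why only one mark type ends up drawn.

```python
from typing import Optional


def sales_bar(viz_type: str, sales: float) -> Optional[float]:
    """Mirrors IIF([Viz Type]='Bar',[Sales],null): a value only in Bar mode."""
    return sales if viz_type == "Bar" else None


def sales_line(viz_type: str, sales: float) -> Optional[float]:
    """Mirrors IIF([Viz Type]='Line',[Sales],null): a value only in Line mode."""
    return sales if viz_type == "Line" else None


# With the parameter set to "Bar", the line measure is empty (None),
# so there is nothing for the line marks to draw.
bar_value = sales_bar("Bar", 100.0)
line_value = sales_line("Bar", 100.0)
```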

Next, I need to build the viz. I've placed Order Date on the Columns shelf and Sales Bar on the Rows shelf. I then changed the Mark Type to Bar. I've also shown the Viz Type parameter control.

Now place the Sales Line field on the secondary axis of the chart to create a dual axis chart.

Once you drop the Sales Line field on the secondary axis, notice how the bars have changed color and the secondary axis only shows zero.

To fix the axis scale, you need to align the two axes by right-clicking the dual axis and selecting Synchronize Axis.

To fix the color, make sure you have All chosen on the Marks card and remove Measure Names from the Color shelf.

And now you should be back to the blue bars.

The last step is to change the mark type for the secondary axis to a line. Right-click the dual axis, choose Mark Type => Line.

That's it, you're done! Use the Viz Type drop down to change the chart type. Download the workbook here.

October 19, 2013

Are pie charts a good reason to fire your stock broker?

- Added crazy marks to some of the slices (I guess that's because it's in B&W)

- Labeled the slices to two decimals (way too precise for me)

- Didn't sort the slices in descending order

September 27, 2013

Tableau Tip: Creating a chart that only displays the last day of each year, quarter or month

Download the workbook here.

September 18, 2013

Tableau Tip: Sorting an "Other" dimension member at the end of a list

There are times when you would like to sort the Other member at the bottom of your list. For example, you might have a bar chart of sales by subcategory that you want to sort in descending order, but you want to show the individual subcategories followed by Other at the end. Typically, the bar chart would look like this (I'm highlighting Other to make it easier to track for this example):

So how can I get Other to be at the bottom of this list? Simple: create a calculated field that returns the measure you want to sort by, but flips it to a negative value for the "Other" member.

Now, right-click on the dimension you want to sort, choose Sort, then in the Sort by section, choose the calculated field you created in the step above and sort in descending order.

And as easy as that, you have Other sorted at the bottom.
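To see why the trick works, here is a minimal sketch of the same idea in Python rather than Tableau. The subcategory names and sales figures are invented for illustration, and the sketch assumes every measure value is non-negative; if a real subcategory could have sales below the negated Other value, it would sort after Other.

```python
def sort_key(subcategory: str, sales: float) -> float:
    """Return the measure, negated for "Other" so it sorts last when descending."""
    return -sales if subcategory == "Other" else sales


# Invented sample data: "Other" has the largest total.
rows = [("Chairs", 500.0), ("Other", 900.0), ("Paper", 200.0)]

# Sort descending by the helper key, as in the Sort dialog step above.
ordered = sorted(rows, key=lambda r: sort_key(r[0], r[1]), reverse=True)
# ordered == [("Chairs", 500.0), ("Paper", 200.0), ("Other", 900.0)]
```

The displayed measure stays untouched; only the sort key is negated, so the Other bar still shows its true total.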

Note: This does not work for groups because Tableau does not allow you to leverage groups in calculated fields.